Void tcp_set_state(struct sock *sk, int state) ĭiff -git a/net/core/dev.c b/net/core/dev.c Int tcp_filter(struct sock *sk, struct sk_buff *skb) Replace the sk_buff_head with a single-linked list (Jakub)Īdd a READ_ONCE()/WRITE_ONCE() for the lockless read of sd->defer_list Kernel profiles on cpu running user thread recvmsg() looks better:Ĩ1.35% copy_user_enhanced_fast_stringġ.27% _netif_receive_skb_coreĠ.44% _raw_spin_lock_irqsaveĠ.37% native_irq_return_iretĠ.33% _inet6_lookup_establishedĠ.31% ip6_protocol_deliver_rcuĭo not defer if (alloc_cpu = smp_processor_id()) (Paolo) (*) 1.59% free_unref_page_commitĠ.54% flush_smp_call_function_queue Kernel profiles on cpu running user thread recvmsg() show high cost forĥ7.81% copy_user_enhanced_fast_string These conditions can happen in more general production workload. Note that this tuning was only done to demonstrate worseĬonditions for skb freeing for this particular test. Page recycling strategy used by NIC driver (its page pool capacityīeing too small compared to number of skbs/pages held in sockets Tested on a pair of hosts with 100Gbit NIC, RFS enabled,Īnd /proc/sys/net/ipv4/tcp_rmem tuned to 16MB to work around If we see any contention on sd->defer_lock. Note that we can add in the future a small per-cpu cache This is because skbs in this list have no requirement on how fast Run net_action_rx() handler fast enough, we use an IPI to raiseĪlso, we do not bother draining the per-cpu list from dev_cpu_dead()

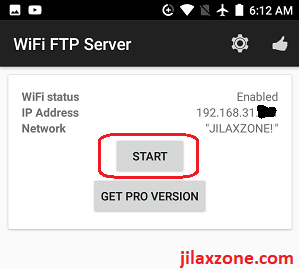

In the (unlikely) cases where the cpu does not In normal conditions, skbs are added to the per-cpu list with This new per-cpu list is drained at the end of net_action_rx(),Īfter incoming packets have been processed, to lower latencies. Instead uses a per-cpu list, which will hold more skbs per round. This patch removes the per socket anchor (sk->defer_list) and On the cpu that originally allocated them. Ideally, it is better to free the skbs (and associated page frags) This issue is more visibleįor RFS, even if BH handler picks the skbs, they are still pickedįrom the cpu on which user thread is running. Skb payload has been consumed, meaning that BH handler has no chance Lock is released") helped bulk TCP flows to move the cost of skbsįrees outside of critical section where socket lock was held.īut for RPC traffic, or hosts with RFS enabled, the solution is far fromįor RPC traffic, recvmsg() has to return to user space right after Logic added in commit f35f821935d8 ("tcp: defer skb freeing after socket ` (4 more replies) 0 siblings, 5 replies 18+ messages in thread MiXplorer's developer gives the app away for free on the XDA Developer forums, but if you'd like to support the development of MiXplorer you can get the paid version on the Play Store. Net: generalize skb freeing deferral to per-cpu lists All of help / color / mirror / Atom feed * net: generalize skb freeing deferral to per-cpu lists 20:12 Eric Dumazet To start an FTP server, tap on the menu at the top-right, then tap on Servers, followed by Start FTP Server.
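The deferral scheme described in this message can be illustrated with a small user-space simulation. This is only a sketch under stated assumptions, not the kernel code from the patch: `struct buf`, `struct cpu_data`, `buf_alloc()`, `buf_defer_free()`, `cpu_drain_deferred()`, `NR_CPUS` and the `DEFER_MAX` threshold are all hypothetical names and values chosen for illustration. CPUs are modelled as array slots, the IPI is modelled as a flag, and locking is omitted because the sketch is single threaded.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdlib.h>

#define NR_CPUS   4   /* hypothetical CPU count for the simulation */
#define DEFER_MAX 8   /* hypothetical backlog at which the owner CPU is kicked */

struct buf {                  /* stands in for struct sk_buff */
    struct buf *next;         /* single-linked list, as in the revision notes */
    int alloc_cpu;            /* CPU that allocated this buffer */
};

struct cpu_data {             /* stands in for the per-cpu data (sd) */
    struct buf *defer_list;   /* buffers waiting to be freed on this CPU */
    int defer_count;
    bool kick_pending;        /* models "an IPI was sent to this CPU" */
    int freed;                /* buffers actually freed on this CPU */
};

static struct cpu_data cpus[NR_CPUS];

/* Allocate a buffer and remember which CPU allocated it. */
struct buf *buf_alloc(int cpu)
{
    assert(cpu >= 0 && cpu < NR_CPUS);
    struct buf *b = calloc(1, sizeof(*b));
    if (b)
        b->alloc_cpu = cpu;
    return b;
}

/* Called by the consumer running on this_cpu once the payload is consumed. */
void buf_defer_free(struct buf *b, int this_cpu)
{
    assert(b != NULL && this_cpu >= 0 && this_cpu < NR_CPUS);

    /* Do not defer when the consumer already runs on the allocating CPU. */
    if (b->alloc_cpu == this_cpu) {
        cpus[this_cpu].freed++;
        free(b);
        return;
    }

    /* Real code would take a per-cpu lock (sd->defer_lock) around this. */
    struct cpu_data *sd = &cpus[b->alloc_cpu];
    b->next = sd->defer_list;
    sd->defer_list = b;

    /* If the owner CPU is not draining fast enough, ask it to run. */
    if (++sd->defer_count >= DEFER_MAX)
        sd->kick_pending = true;
}

/* Called on cpu at the end of its RX handler; returns the number freed. */
int cpu_drain_deferred(int cpu)
{
    assert(cpu >= 0 && cpu < NR_CPUS);
    struct cpu_data *sd = &cpus[cpu];
    struct buf *b = sd->defer_list;
    int n = 0;

    /* Detach the whole list first, then free outside the (omitted) lock. */
    sd->defer_list = NULL;
    sd->defer_count = 0;
    sd->kick_pending = false;

    while (b) {
        struct buf *next = b->next;
        free(b);
        n++;
        b = next;
    }
    sd->freed += n;
    return n;
}
```

In this model a consumer on cpu 2 that releases buffers allocated on cpu 0 only pushes them onto cpu 0's list; the memory is returned when cpu 0 next calls cpu_drain_deferred(0), so the free happens on the allocating cpu as the message recommends.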
